Infrastructure as Code and the Upside Down

“How many servers?!” I asked, and the Linux admin again confidently replied “300”. To be honest, the exact number escapes me now, but it was hundreds, and I absolutely remember the sinking feeling upon realising I had two hours to assess the security of a sizeable Linux server estate for a PCI DSS assessment of a large client in Germany. That’s how it was back then in the mid-2010s: you could inventory systems during scoping and identify configuration management solutions that might speed things up, but when all was said and done, you’d be lucky to have more than an hour or two to discuss security processes and configurations with an admin.

Was it “tick box” (I hate that term)? No - I was diligent, as were most QSAs I worked with. I reviewed the configuration standard and build process beforehand and identified the configuration settings critical to the security of the systems. My objective was ultimately to determine whether the hardening process was being applied effectively; by sampling systems and settings, I could verify this with a reasonable degree of confidence while identifying areas for improvement or control gaps. I had seven years’ engineering experience, so I knew what good looked like, but with so many systems and so many settings to check, was there room for improvement? Absolutely - assessors could insist on configuration management reports to provide a consolidated, complete view, ask admins to run scripts or… ask for more time. More often than not, though, those options weren’t on the table. Mandating that a client use a compliance tool? Good luck with that. Asking admins to run scripts in production environments? Sometimes possible, but change control was usually the pushback. Asking for more time? Well, if assessors priced in as much time as they wanted, they’d never win the contract in the first place - such are the commercial realities of assessments and audits.

The anecdote illustrates the practical challenges of auditing or assessing systems against security policies or standards. At the time, this was simply the reality of infrastructure compliance. Today, however, the widespread use of Infrastructure as Code (IaC) fundamentally changes what effective assurance looks like. Yes, many environments still have large numbers of systems, but they can now be ephemeral or containerised – spinning in and out of existence multiple times during an auditor’s coffee break. Infrastructure is now deployed as code (e.g. Terraform HCL or AWS CloudFormation templates), which can be treated as the authoritative definition of your infrastructure. I’ll admit that when I first realised this, it blew my mind – I occasionally tried to explain it to non-technical friends at the time (working through their glazed looks of indifference).

The problem is this: in cloud environments, if you assess infrastructure (systems, networks, firewalls, databases, etc.) for any GRC reason (audit, risk assessment, control assessment or compliance reporting) by sampling it as described above, you will likely miss a significant area of risk. The reason? Modern cloud infrastructure exists in at least two planes. No, not the Upside Down and the Right Side Up, but:

- The Data Plane – where workloads run and traffic flows.

- The Control Plane – where the data plane is managed, through APIs and orchestration systems that create and configure resources.

If a GRC professional focuses only on the data plane when assessing infrastructure, they skip the source of truth for every cloud resource managed via IaC: the code that drives the control plane. It’s therefore far more reliable and efficient to use the IaC repositories as the starting point for assessing cloud infrastructure security, since they define every managed resource rather than a sample of them.
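As a rough illustration of treating IaC as the source of truth, here is a minimal Python sketch of the inverse check: resources running in an account that Terraform does not manage, which a review of the repository alone would therefore miss. It assumes Terraform state has been exported with `terraform show -json` and that a set of live resource IDs (`live_ids`) has been gathered from some cloud inventory source; the function names are hypothetical.

```python
from typing import Any, Iterator


def iter_state_resources(module: dict[str, Any]) -> Iterator[dict[str, Any]]:
    """Yield every resource in a state module, recursing into child modules."""
    yield from module.get("resources", [])
    for child in module.get("child_modules", []):
        yield from iter_state_resources(child)


def managed_ids(state: dict[str, Any]) -> set[str]:
    """Collect the cloud IDs of resources Terraform manages (data sources excluded)."""
    root = state.get("values", {}).get("root_module", {})
    ids = set()
    for resource in iter_state_resources(root):
        values = resource.get("values") or {}
        if resource.get("mode") == "managed" and "id" in values:
            ids.add(values["id"])
    return ids


def unmanaged(live_ids: set[str], state: dict[str, Any]) -> set[str]:
    """IDs seen running in the account (hypothetical inventory input) but absent from state."""
    return live_ids - managed_ids(state)
```

Anything `unmanaged` returns sits outside the IaC source of truth, so it falls back to the data-plane sampling approach described earlier.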

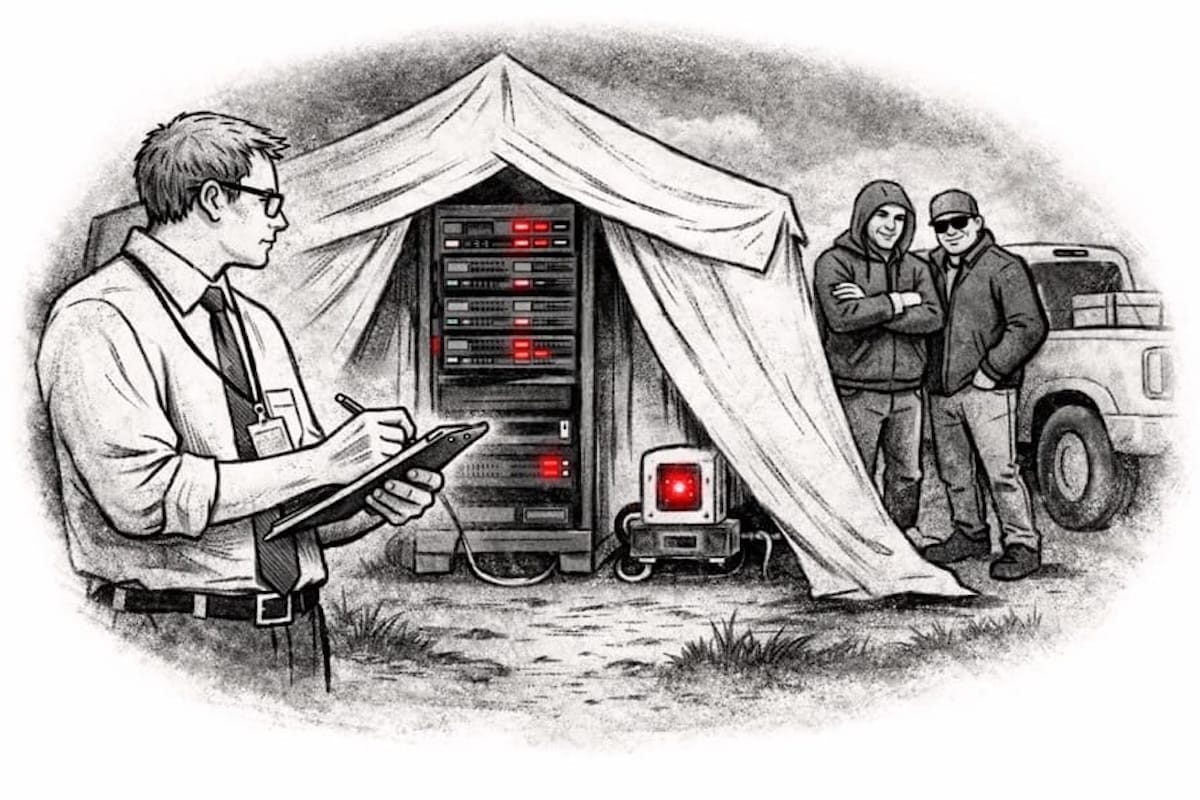

Worse still, assessors may fail to review the security controls in the CI/CD (Continuous Integration / Continuous Deployment) pipeline. For those unfamiliar with them, CI/CD pipelines are workflows defined in platforms like GitHub Actions, GitLab CI or Jenkins which automate code testing, scanning and deployment. Omitting pipelines from a cloud security assessment is like reviewing infrastructure controls for a server rack in a tent… Hardened systems? Check. Network access control? Check. Server rack on the back of a pick-up? Check. (I’m yet to read a breach report for that scenario, but you never know!) If assessors aren’t reviewing the pipeline and supporting development processes, they are missing the entire security context the infrastructure controls depend upon - what gets deployed, who can deploy it and how it’s validated. In other words, cloud security can only ever be measured in the context of the development processes used to deploy cloud infrastructure.

The impact on assurance, and on the business, may be significant. What’s worse than poor security? A false sense of security. GRC teams may be reporting on the security of resources within cloud accounts while oblivious to an entire risk area; assessors or security teams may not even realise that the security of their infrastructure depends on development processes at all. Is it any wonder the Cloud Security Alliance reported Misconfiguration and Change Management as a ‘Tier 1’ threat in its Top Threats to Cloud Computing – Deep Dive 2025 report? Despite this, very few industry standards address this area of risk explicitly. The CIS Benchmarks for AWS and Azure focus on the state of the cloud environment. The CSA’s CCM (Cloud Controls Matrix) has relevant controls (e.g. Change Control and Configuration Management) but doesn’t explicitly target IaC or CI/CD pipelines, leaving assessments reliant on assessor knowledge and interpretation. Is this indicative of a lag between DevOps / DevSecOps and the GRC community? Perhaps, but that is a discussion for a follow-up article.

Given the risk, and the prevalence of real-world breaches resulting from cloud security misconfigurations, what should GRC folks be doing in this space?

GRC Oversight

Firstly, coordinate with security architecture to establish GRC oversight for assessing controls and reporting on the risks associated with CI/CD pipelines. Engineering and development teams tend to be more familiar with CI/CD pipeline security issues and so may feel a sense of ownership. Operationally that makes sense, but GRC teams may need to make the case for some level of oversight and risk reporting.

Cloud Inventory

Secondly, cloud accounts should be inventoried, and the inventory should include a deployment method category detailing whether the account is managed manually or whether infrastructure is deployed via an automated CI/CD pipeline.
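A minimal sketch of such an inventory record in Python, with a helper that turns it into assessment scope. The field names and the `pipeline` attribute are hypothetical; the two deployment categories mirror the ones described above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class DeploymentMethod(Enum):
    MANUAL = "manual"         # changed by hand via the console or ad-hoc CLI
    CI_CD_PIPELINE = "ci_cd"  # infrastructure deployed by an automated pipeline


@dataclass(frozen=True)
class CloudAccount:
    account_id: str
    owner: str
    deployment_method: DeploymentMethod
    pipeline: Optional[str] = None  # hypothetical: name of the pipeline that deploys it


def scope_assessment(accounts: list[CloudAccount]) -> dict[str, list[str]]:
    """Split the inventory into pipelines to assess, manual accounts to sample, and gaps."""
    scope: dict[str, list[str]] = {"pipelines": [], "manual_accounts": [], "inventory_gaps": []}
    for account in accounts:
        if account.deployment_method is DeploymentMethod.MANUAL:
            scope["manual_accounts"].append(account.account_id)
        elif account.pipeline is None:
            # Marked as pipeline-deployed but no pipeline recorded: fix the inventory first.
            scope["inventory_gaps"].append(account.account_id)
        elif account.pipeline not in scope["pipelines"]:
            # Several accounts may share one pipeline; assess it once.
            scope["pipelines"].append(account.pipeline)
    return scope
```

Scoping by pipeline rather than by account means a pipeline that deploys several accounts is assessed once, and accounts marked as pipeline-deployed with no recorded pipeline surface as inventory gaps rather than silently dropping out of scope.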

Assessment

With a complete inventory, GRC teams will be better able to scope development processes for assessment. Assessors should be familiar with DevSecOps processes to ensure CI/CD pipelines are fully reviewed. They should also understand policy-as-code tools (e.g. OPA and Conftest), which automate security testing of code before it is deployed, delivering true ‘shift left’ capability. In my experience, standard development policies that simply mandate ‘code review’ or a ‘secure code repository’ without going into any greater depth don’t cut it. If the organisation doesn’t have suitable policies or standards to structure assessments, they should be developed with reference to NIST SP 800-218 (the Secure Software Development Framework).
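To make policy-as-code concrete: OPA and Conftest evaluate policies written in Rego against structured input such as a Terraform plan exported with `terraform show -json`. The sketch below expresses an equivalent deny rule in Python rather than Rego, assuming the AWS provider’s `aws_security_group` resource with inline `ingress` rules: it rejects any planned security group that would allow SSH from 0.0.0.0/0. It is a stand-in for a Rego policy, not Conftest’s API, and the function name is hypothetical.

```python
from typing import Any

OPEN_CIDR = "0.0.0.0/0"


def deny_open_ssh(plan: dict[str, Any]) -> list[str]:
    """Return violations for planned security groups that would allow SSH from anywhere."""
    violations = []
    for rc in plan.get("resource_changes", []):
        if rc.get("type") != "aws_security_group":
            continue
        # `after` is null when the resource is being deleted.
        after = rc.get("change", {}).get("after") or {}
        for rule in after.get("ingress") or []:
            all_traffic = rule.get("protocol") == "-1"  # AWS uses -1 for all protocols/ports
            covers_ssh = all_traffic or rule.get("from_port", 0) <= 22 <= rule.get("to_port", 0)
            if covers_ssh and OPEN_CIDR in (rule.get("cidr_blocks") or []):
                violations.append(f"{rc['address']}: SSH open to {OPEN_CIDR}")
    return violations
```

In a pipeline, a non-empty result would fail the job before `terraform apply` runs, which is where the ‘shift left’ value comes from; an assessor can ask to see both the policies and the pipeline step that enforces them.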

Raising the Bar

Finally, where new controls must be implemented, GRC teams should agree them with security engineering, architecture and development teams, ensuring they don’t impede CI/CD deployment cadence.

Conclusion

The most important recommendation is a mindset shift - GRC teams must recognise that IaC shifts risk toward the control plane and CI/CD pipelines. I realised the power of IaC myself some time ago when I ran my first ‘terraform destroy’ and watched weeks’ worth of work disappear in seconds. That was fine in a lab, where I could rebuild it just as quickly; however, if production credentials end up in the wrong hands, the damage could be on a far greater scale than we’re accustomed to hearing about. Instead of a single server or database being compromised, it could be multiple platforms with hundreds or thousands of servers and databases. The potential for widespread, long-lasting damage stands out to me so clearly, and yet I see little focus on it across the GRC industry. The SolarWinds attack in 2020, while widely discussed as a supply chain compromise, was fundamentally enabled by a compromised CI/CD pipeline. Yet despite the attack taking place six years ago, this dimension receives little attention within the GRC community. If your GRC function hasn’t considered the security of CI/CD pipelines and IaC, forward this article to colleagues and consider its recommendations to avoid ending up in your very own Upside Down.